Open data, open code, open question

We've built world-class open data and promising open tools. But a theory about frictionless reproducibility suggests the Earth observation community is still missing the ingredient that turns infrastructure into acceleration.

Last week, during our Development Seed team week, our CEO Ian Schuler mentioned a paper in his state-of-the-company talk: "Data Science at the Singularity", a dense, fifty-page academic piece by Stanford statistician David Donoho. Ian kept coming back to one phrase: frictionless reproducibility. I must admit that, in the moment, it didn't quite land. Or rather, it landed somewhere familiar. My mind split the phrase in two: reproducibility went straight to scientific rigour, peer review, replicable experiments; frictionless went to open source, open data, performance, things I've been working with for years. I thought "yes, of course, we all agree on that." I moved on.

Later that week, chatting with Ian as we were wrapping up the team week, he actually shared the article. I skimmed it. The language was heavy, the footnotes relentless, and I put it aside. It was only this week that I sat down properly with it, and something shifted. What Donoho describes isn't reproducibility as a virtue. It's reproducibility as a mechanism, a structural condition that, when met, triggers an acceleration in scientific progress so dramatic he calls it a singularity. Not the AI singularity everyone keeps worrying about, but something quieter and far more consequential.

His argument is deceptively simple. The breathtaking advances we've been hearing about (large language models, protein structure prediction, computer vision) are not primarily the result of some mysterious AI superpower. They're the consequence of three very specific practices finally coming together, after decades of slow maturation, into what he calls Frictionless Reproducibility.

Three practices. That's it. And the interesting part, for those of us working in Earth observation, is that we might be closer to this threshold than we think, and further from it than we'd like to admit.

This post is a bit different from the usual fare. It's less about a specific technology and more about a framework that I think deserves attention from our community. Bear with me through the theory, because the payoff, I believe, is worth it.

Three pillars, one threshold

Donoho's framework rests on three pillars, each necessary, none sufficient alone:

Data sharing (FR-1): not just making data available in principle, but offering frictionless, programmatic, single-click access to standardised datasets. No registration forms, no approval committees, no waiting. One line of code and you have the data.

Code sharing and re-execution (FR-2): not just publishing your script on GitHub, but enabling someone to reproduce your entire workflow, end to end, within minutes of discovering your work. The full pipeline, the environment, the dependencies, everything needed to go from raw input to published result.

Challenges with standardised metrics (FR-3): and this is perhaps the least intuitive of the three. Formalised problems where competing teams work on the same dataset, scored by the same metric, ranked on a public leaderboard. A shared definition of what "better" actually means.

Donoho's central claim is that when all three come together as frictionless services, when any researcher anywhere can access the data, re-run the code, and measure their improvement against a common benchmark, something extraordinary happens. A kind of chain reaction. He calls it a Frictionless Research Exchange: researchers enter a tight loop of reproducing, tweaking, improving, and sharing, and the pace of progress accelerates dramatically.

The fields where this triad is most mature, empirical machine learning in particular, are precisely the ones producing the advances that get mistaken for "AI magic." It's not magic. It's infrastructure.

How sorting mail changed everything

The concept might sound abstract, so let me borrow an example that made it click for me.

In the late 1980s, the US Postal Service had a very concrete problem: reading handwritten zip codes on millions of envelopes a day. Every misread digit meant a letter going to the wrong sorting centre. At Bell Labs, researcher Isabelle Guyon and her colleagues, including Yann LeCun, worked on exactly this problem, training early neural networks on digits scanned from real postal mail. That line of work led to MNIST: handwritten digits drawn from collections held by the US National Institute of Standards and Technology, cleaned, standardised into small 28x28 pixel images, and published as a benchmark. The offer was, essentially: here are 60,000 training images and 10,000 test images. The task is classification. The metric is error rate. Show us what you've got.

Same data. Same metric. Same task. No ambiguity about what "better" means.

What happened next is what Donoho finds so revealing. Over the following decades, researchers kept coming back to MNIST. Error rates dropped from around 12% to below 0.5%. Each improvement was precisely measurable because the dataset and metric never changed. The tight loop that Donoho describes, reproduce, tweak, improve, share, was running at full speed. And it worked because the challenge wasn't framed as an abstract technical puzzle. It was framed around a real operational need: can your algorithm read what a human wrote on an envelope?
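To put those error rates in concrete terms, here is a minimal Python sketch of the scoring side of such a challenge. The toy labels and the submission are made up for illustration, and the helper names (`error_rate`, `misclassified`) are my own, not part of any MNIST tooling.

```python
TEST_SET_SIZE = 10_000  # number of MNIST test images, as stated above


def error_rate(predictions, labels):
    """Fraction of test items whose predicted class differs from the true label."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    wrong = sum(p != y for p, y in zip(predictions, labels))
    return wrong / len(labels)


def misclassified(rate, n=TEST_SET_SIZE):
    """Number of misread items that a given error rate implies on n test items."""
    return round(rate * n)


# Made-up ground truth and one made-up submission (two digits are wrong).
labels = [3, 1, 4, 1, 5, 9, 2, 6]
submission = [3, 1, 4, 7, 5, 9, 2, 0]

print("toy error rate:", error_rate(submission, labels))
print("misread digits at 12%:", misclassified(0.12))
print("misread digits at 0.5%:", misclassified(0.005))
```

On a 10,000-image test set, a 12% error rate means 1,200 misread digits and 0.5% means 50. Because the labels and the metric stay fixed, any two submissions are directly comparable, however many years apart they arrive.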

From there, the same techniques and community momentum flowed into bank cheque reading, document digitisation, licence plate recognition, and eventually the broader computer vision revolution that now powers everything from medical imaging to satellite image classification. Guyon herself went on to found ChaLearn, an organisation that runs prediction challenges across many domains, making the framework itself reproducible. A similar pattern played out in biology, where the CASP protein structure prediction challenge ran for more than two decades before DeepMind's AlphaFold breakthrough, which is now reshaping drug discovery.

The pattern is always the same: define the problem around a real need, agree on the metric, share the data, and let the community iterate. The acceleration follows.

Where does Earth observation stand?

Reading all this, I couldn't help looking at our own field through Donoho's lens. Not to grade ourselves, but to understand which parts of this machinery we've already built, perhaps without realising it, and where the scheme could take us further.

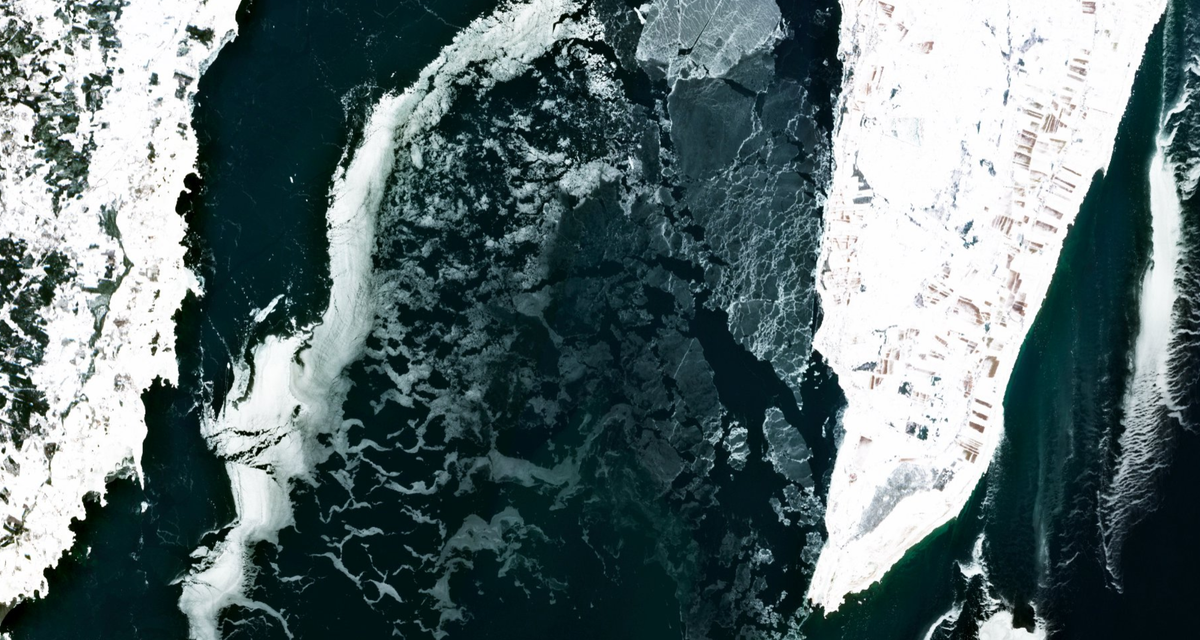

Data sharing: remarkably strong. This is where Earth observation genuinely shines, perhaps more than almost any other scientific domain. The Copernicus programme alone represents one of the most ambitious open data policies ever implemented. Sentinel-1, Sentinel-2, Sentinel-3: petabytes of systematically acquired, freely accessible, globally consistent observations. Add Landsat's open archive, the proliferation of STAC catalogues, cloud-native formats like COG and Zarr, and planetary-scale platforms like the Copernicus Data Space Ecosystem, and you have an FR-1 implementation that would make most scientific fields envious. We don't just share data, we've built an entire ecosystem around making it frictionlessly discoverable and accessible.

Code sharing and re-execution: developing, but far from frictionless. This is where things get more nuanced. Yes, there are repositories full of processing scripts, and containerisation is making it easier to package environments reproducibly. But be honest with yourself: if a colleague published an interesting result using an InSAR processing chain, could you reproduce their entire workflow, from raw SLC to final displacement map, in a single afternoon? In most cases, the answer is no. There are too many undocumented steps, too many environment-specific dependencies, too many implicit choices buried in parameter files that never made it into the paper. We're somewhere between what Donoho calls "In-Principle Reproducibility" and true frictionless re-execution, and the gap matters more than we might think. The building blocks exist, whether standardised processing APIs like openEO, community-driven tool ecosystems like Pangeo, or infrastructure patterns like containerisation, but they address different parts of the problem and the community hasn't converged on a coherent stack that would make re-execution truly frictionless.

Challenges with standardised metrics: largely absent. And this, I suspect, is the real story. Where is our MNIST? Where is the formalised, recurring challenge that says: here is a well-defined problem, here is the dataset, here is exactly how we measure success, now show us what you've got?

There are fragments, certainly. The IEEE GRSS Data Fusion Contests have run for years with shared datasets and ranked submissions, and initiatives like SpaceNet have done something similar for building footprint extraction and road network mapping. These are valuable, and they follow the same pattern quite closely. But they tend to focus on classification and detection tasks, the kinds of problems that map neatly onto standard machine learning benchmarks.

What about the harder, more domain-specific challenges? Consider phase unwrapping in SAR interferometry, a problem the community has wrestled with for decades. When a radar satellite measures ground displacement, it doesn't give you a direct distance. It gives you a phase measurement that wraps around every few centimetres, a bit like a clock that resets to zero after twelve. Recovering the actual displacement from these wrapped measurements is the unwrapping problem, and it breaks down exactly where you need it most: steep terrain, fast-moving landslides, vegetated areas where the signal decorrelates.
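The clock analogy can be sketched in a few lines of Python. This is an illustration only: the wavelength is an assumed C-band value of about 5.55 cm, the sign convention is one of several in use, and the function names are mine.

```python
import math

WAVELENGTH_M = 0.0555  # assumed C-band radar wavelength, about 5.55 cm


def displacement_to_phase(d_m):
    """Phase for a line-of-sight displacement d, counting the two-way path (4*pi*d/lambda)."""
    return 4.0 * math.pi * d_m / WAVELENGTH_M


def wrap(phase):
    """Wrap a phase in radians into the interval [-pi, pi)."""
    return (phase + math.pi) % (2.0 * math.pi) - math.pi


# Two displacements that differ by exactly half a wavelength (about 2.8 cm).
d_small = 0.005
d_large = d_small + WAVELENGTH_M / 2.0

phi_small = wrap(displacement_to_phase(d_small))
phi_large = wrap(displacement_to_phase(d_large))

print(f"{d_small * 100:.2f} cm -> {phi_small:.4f} rad")
print(f"{d_large * 100:.2f} cm -> {phi_large:.4f} rad")
```

Both displacements produce the same wrapped phase, so a single pixel cannot tell them apart. Unwrapping has to recover the missing whole cycles from neighbouring pixels, which is exactly what fails when the phase jumps by more than half a cycle between neighbours or when the signal is noisy.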

Multiple approaches exist, and researchers and implementers alike do compare them, in papers, in toolchains, in internal benchmarks. But these comparisons are always local: someone's choice of methods, someone's test cases, someone's implementation. The results are frozen at the moment of publication or deployment. If a better approach appears next year, there's no systematic way to measure its improvement against everything that came before.

Now imagine the FR-3 version. A public, permanent test dataset. A fixed metric. Anyone, researcher or engineer, can submit their method at any time and see exactly where it ranks. Each team implements and optimises their own approach. The leaderboard is alive, accumulating results over years. And because FR-2 requires code sharing, every submission is inspectable, so the community can learn why one method outperforms another, not just that it does. The comparison becomes a living process, not a frozen snapshot.
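A toy version of that leaderboard fits in a few lines of Python. Everything here is a simplifying assumption: the benchmark is a synthetic, noise-free, one-dimensional phase ramp, whereas real unwrapping is two-dimensional and noisy; the metric is plain RMSE; and the two "submissions" are a do-nothing baseline and the classic one-dimensional approach of integrating wrapped phase differences (often attributed to Itoh). All names are hypothetical.

```python
import math


def wrap(phase):
    """Wrap a phase in radians into the interval [-pi, pi)."""
    return (phase + math.pi) % (2.0 * math.pi) - math.pi


def rmse(estimate, truth):
    """The fixed metric: root-mean-square error against the hidden true phase."""
    if len(estimate) != len(truth):
        raise ValueError("estimate and truth must have the same length")
    return math.sqrt(sum((e - t) ** 2 for e, t in zip(estimate, truth)) / len(truth))


# Frozen benchmark: a synthetic ramp and the wrapped signal submitters receive.
TRUE_PHASE = [0.4 * i for i in range(50)]
WRAPPED = [wrap(p) for p in TRUE_PHASE]


def no_unwrapping(wrapped):
    """Baseline submission: return the wrapped phase unchanged."""
    return list(wrapped)


def integrate_wrapped_differences(wrapped):
    """1D unwrapping: sum the wrapped differences between neighbouring samples."""
    out = [wrapped[0]]
    for prev, cur in zip(wrapped, wrapped[1:]):
        out.append(out[-1] + wrap(cur - prev))
    return out


SUBMISSIONS = {
    "no_unwrapping": no_unwrapping,
    "integrate_wrapped_differences": integrate_wrapped_differences,
}


def run_leaderboard(submissions):
    """Score every submission on the same data with the same metric, best first."""
    scores = [(name, rmse(method(WRAPPED), TRUE_PHASE)) for name, method in submissions.items()]
    return sorted(scores, key=lambda entry: entry[1])


for rank, (name, score) in enumerate(run_leaderboard(SUBMISSIONS), start=1):
    print(f"{rank}. {name}: RMSE = {score:.6f} rad")
```

The design point is that `TRUE_PHASE`, `WRAPPED` and `rmse` never change. A method submitted years from now would be added to `SUBMISSIONS` and scored against exactly the same data as everything before it.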

So close, and yet

If Donoho's theory holds, and the evidence from empirical machine learning, computer vision, and natural language processing suggests it does, then Earth observation is sitting on a remarkable foundation. Our FR-1 is world-class. Our FR-2 is moving in the right direction. But the missing third ingredient might be exactly what's preventing the kind of explosive, compounding progress that other fields are experiencing.

This isn't a criticism. It's actually quite an optimistic observation, because it suggests that the bottleneck isn't data (we have it), and it isn't talent (we have that too). It's a structural question about how the community organises itself to measure and drive progress.

Why are we doing all this?

But here's what's been nagging at me since I put Donoho's paper down, and it's something I didn't fully grasp at first. I kept trying to think of FR-3 challenges in purely technical terms: can we unwrap phase more accurately, can we correct atmospheric delays more efficiently? And each time, something felt incomplete. Because FR-3 isn't just a benchmarking exercise. It's a question of purpose.

Think about why MNIST worked so well. The question "can your algorithm read a handwritten zip code?" wasn't interesting because it's a clever technical puzzle. It was interesting because the postal service needed the answer. The challenge embodied a real purpose. The metric measured what ultimately mattered.

So when I try to imagine an FR-3 for Earth observation, I find myself asking a more uncomfortable question: why are we doing all this? All the data sharing, all the processing infrastructure, all the workflows, what's the decision at the end of the chain? "Can your algorithm detect that a village is at risk of subsidence before the damage occurs?" is a fundamentally different challenge from "can your algorithm unwrap phase with fewer errors?" The first one structures the problem around the outcome that matters. The second optimises a technical step that most decision-makers will never see.

Aravind Ravichandran writes compellingly about Earth observation needing to become an "invisible indispensable", the way GPS vanished into the background of how we navigate, the way weather forecasting dissolved into how we plan our days. I wonder whether EO remains visible and dispensable precisely because we haven't structured our challenges around outcomes that matter beyond our own community. We optimise for metrics that make sense to engineers and scientists, not for the questions that would make our technology disappear into the fabric of how societies function.

And perhaps that's what FR-3 is really asking us to do. Not just "measure your algorithm against mine," but "prove our field's value in terms that the world actually cares about." Define the challenge, agree on the metric, publish the leaderboard, and let the tight loop of improvement begin.

I don't have answers to these questions yet. But I have a feeling they point somewhere important, and I suspect some of you have been circling the same territory. In the coming posts, I'd like to explore what the ideal EO workflow framework might look like, the kind that would make true re-execution frictionless, and whether there are lessons from other fields we haven't yet borrowed.

Because if Donoho is right, the ingredients are mostly there. We might just need to agree on what we're cooking.
